🚀 Building Production-Ready K3s Clusters: From Basics to GitOps

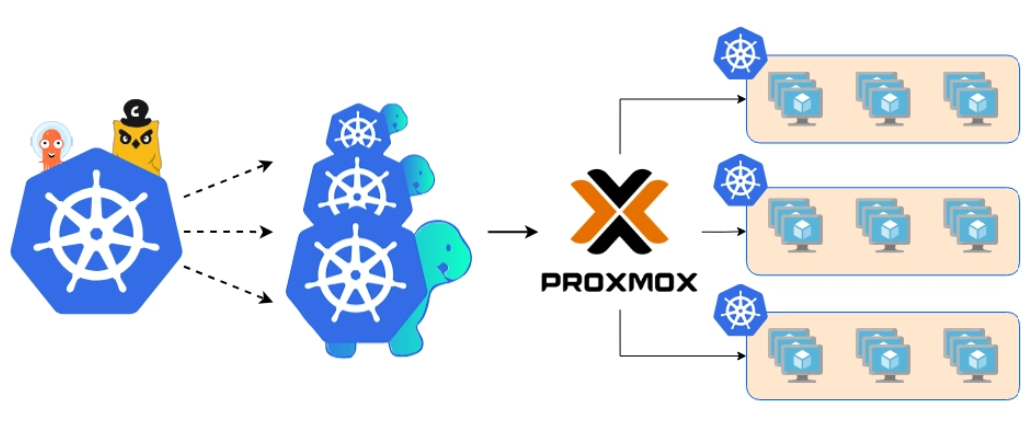

Running Kubernetes at home doesn't have to be complex or expensive. This comprehensive guide documents my journey building K3s clusters on Proxmox VE—from a simple 3-node setup to a production-grade 6-node GitOps environment.

Choose Your Version

Version 1: Foundation

3-Node K3s Cluster with Proxmox and Terraform

- ✓ 1 Master + 2 Workers

- ✓ Terraform Automation

- ✓ Basic K3s Setup

- ✓ Remote kubectl Access

Version 2: Production

6-Node GitOps Cluster with CI/CD and Storage

- ✓ 3 Control Planes + 3 Workers

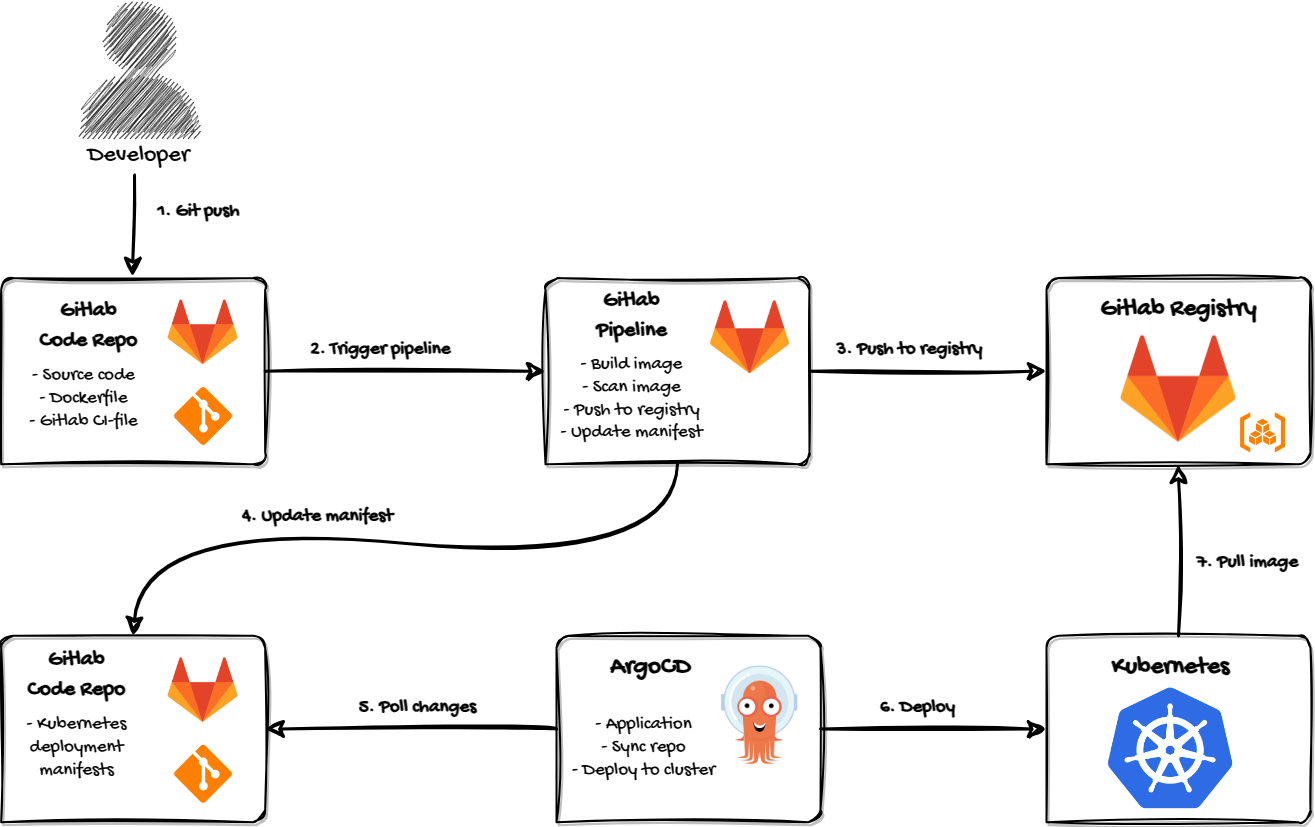

- ✓ GitLab CI/CD Pipeline

- ✓ ArgoCD GitOps

- ✓ Longhorn Distributed Storage

Version 1: Building a 3-Node K3s Cluster with Proxmox and Terraform

This is the foundation setup—perfect for learning Kubernetes basics and getting comfortable with cluster operations.

📂 GitHub Repository

Get the complete Terraform configuration, deployment scripts, and documentation

⭐ View Repository on GitHub

git clone https://github.com/ahmed86-star/k3s-homelab.git

🎯 What We're Building

A lightweight, multi-node Kubernetes cluster featuring:

- 3 Proxmox nodes running virtualized infrastructure

- Automated VM deployment using Terraform

- K3s Kubernetes distribution for minimal resource usage

- 1 master + 2 worker nodes for true cluster behavior

- All managed from a single laptop running Pop!_OS

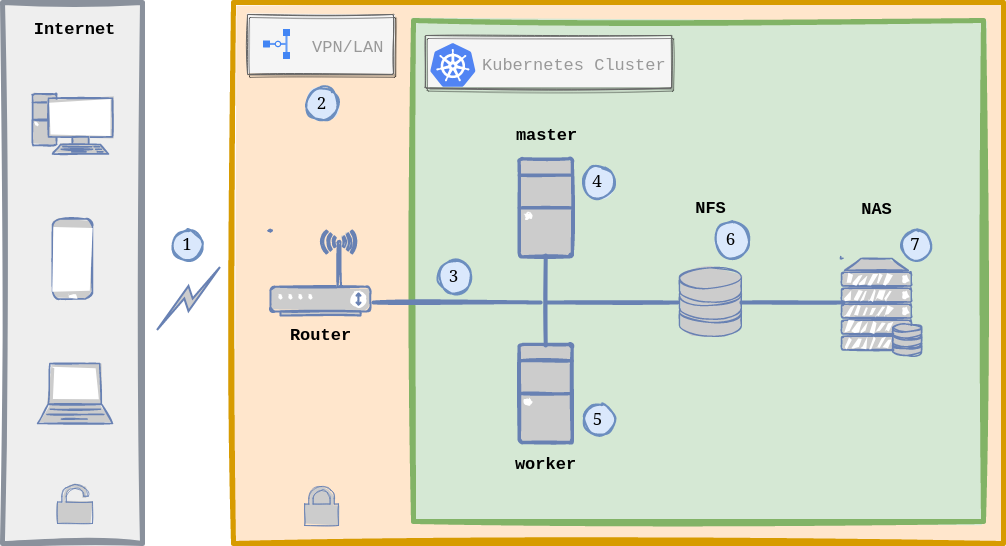

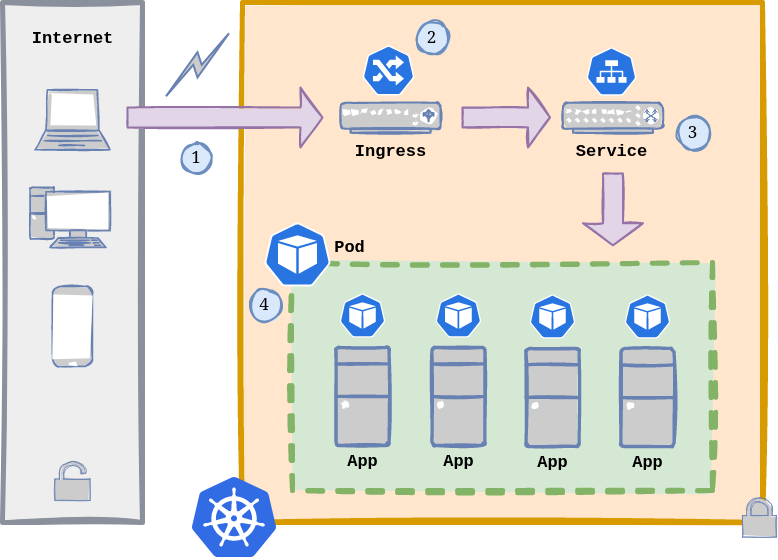

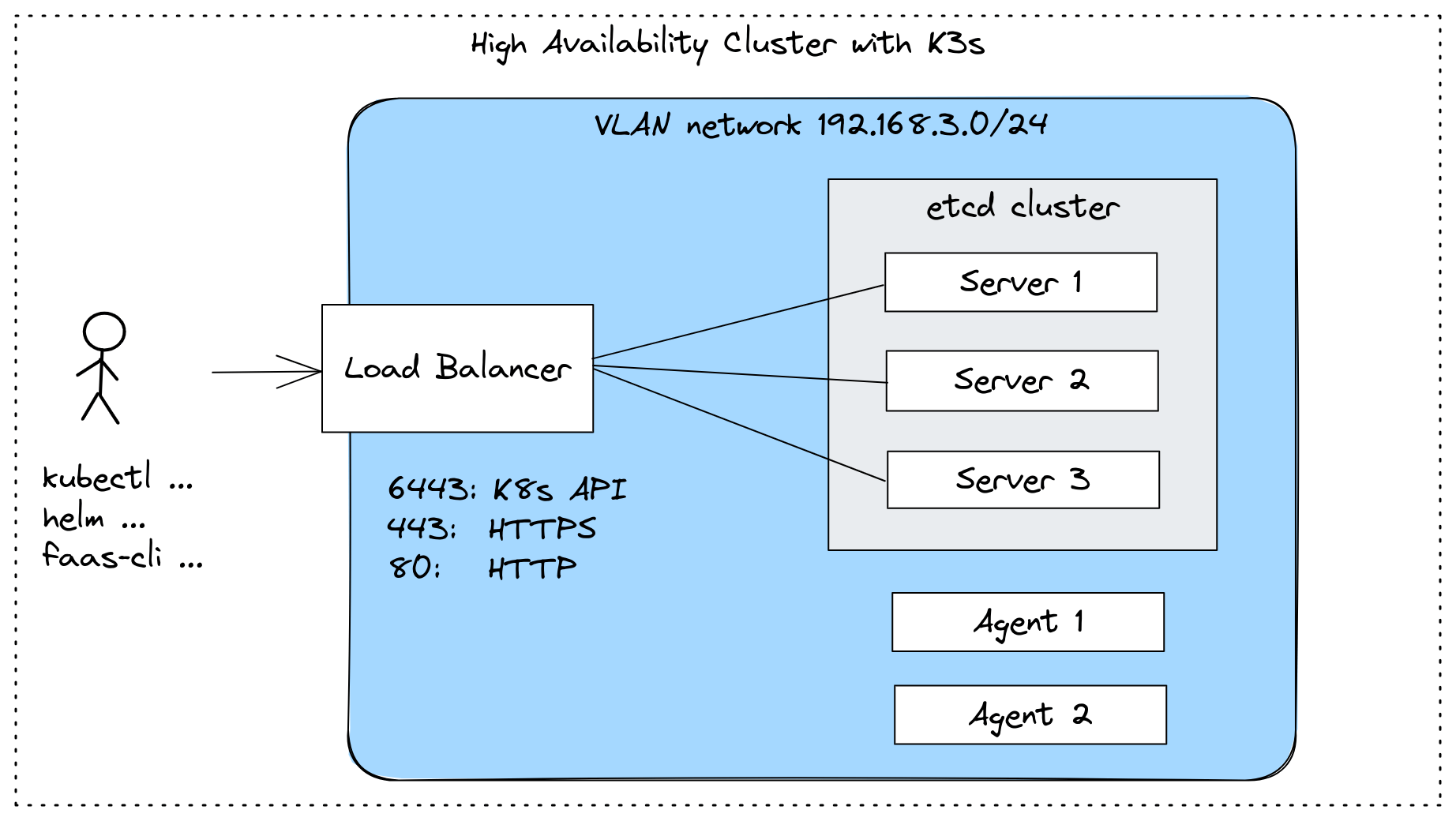

📊 Architecture Diagram

Here's a visual representation of the complete setup:

K3s Cluster Architecture - Terraform deploys to Proxmox, creating a 3-node Kubernetes cluster with 1 master and 2 worker nodes

Proxmox VE Dashboard - Running K3s cluster nodes as virtual machines in Proxmox Virtual Environment

🏗️ Architecture Overview

Physical Hardware

My setup consists of relatively modest hardware that you might already have lying around:

- 3 × Proxmox VE nodes (repurposed desktop hardware or mini PCs work great)

- 1 × Lenovo laptop running Pop!_OS (my admin machine for SSH, Terraform, and kubectl)

- 1 × Netgear GS108E switch (any managed or unmanaged gigabit switch works)

The beauty of this setup is its scalability. Start with what you have and expand as needed.

Admin Workstation

Lenovo Admin Workstation - Running Pop!_OS as the main configuration and management machine with SSH, Terraform, VSCode, and kubectl

Physical Setup

DeskPi RackMate T1-Plus Rackmount - 10-inch 8U Server Cabinet for Network, Servers, Audio and Video Equipment


CAT6A Patch Cables - High-performance Ethernet cables providing reliable gigabit connectivity between network devices

Network Architecture

I'm using a simple flat network design with static IP assignments. For this blog post, I've masked the actual IPs to a documentation-friendly range:

Internal subnet: 10.10.10.0/24

| Hostname | Role | IP Address | Purpose |
|---|---|---|---|
| cluster | K3s master | 10.10.10.11 | Control plane node |
| worker1 | K3s agent | 10.10.10.12 | Worker node |
| worker3 | K3s agent | 10.10.10.13 | Worker node |
| lenovo | Admin machine | 10.10.10.20 | Management workstation |
| netgear | Switch | 10.10.10.1 | Network gateway |

Switch Port Mapping

Here's how everything connects to my Netgear GS108E:

| Port | Device | Hostname | Purpose |
|---|---|---|---|
| 1 | Proxmox Node 1 | cluster | K3s master node |
| 2 | Proxmox Node 2 | worker1 | K3s worker |
| 3 | Proxmox Node 3 | worker3 | K3s worker |
| 4 | Lenovo Pop!_OS | lenovo | Admin workstation |
| 5-8 | — | — | Available for expansion |
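To reach the nodes by name from the admin workstation, the address plan above could be mirrored in /etc/hosts. This is a minimal sketch, assuming the masked IPs and hostnames from the tables. The helper name `k3s_hosts_entries` is made up for illustration, and editing /etc/hosts is only one option (local DNS would work too).

```shell
# Print /etc/hosts lines for the cluster nodes listed in the network table.
# k3s_hosts_entries is a hypothetical helper name, not part of any tool.
k3s_hosts_entries() {
  cat <<'EOF'
10.10.10.11 cluster
10.10.10.12 worker1
10.10.10.13 worker3
EOF
}

# Review the output first, then append it on the workstation if it looks right:
#   k3s_hosts_entries | sudo tee -a /etc/hosts
```

After that, `ssh` and `ping` can use `cluster`, `worker1`, and `worker3` instead of raw addresses.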

📋 Prerequisites

Before diving in, ensure you have:

- Three machines with Proxmox VE installed (version 7.x or 8.x)

- A management machine with SSH, Terraform, and kubectl installed

- Basic networking configured on all Proxmox nodes

- An Ubuntu 22.04 cloud image or VM template in Proxmox

- SSH key pair for passwordless authentication

🔧 Part 1: Proxmox VM Configuration

Each Kubernetes node runs as a VM on Proxmox with these specifications:

Ubuntu VM Template Specs

CPU: 2 cores (can scale up based on workload)

RAM: 4-8 GB (4 GB is my baseline; K3s itself can run on less)

Disk: 20-40 GB (thin provisioned)

Boot: UEFI (my preference; Ubuntu cloud images also boot under Proxmox's default SeaBIOS)

Network: Bridged (vmbr0)

Why these specs? K3s is incredibly lightweight compared to full Kubernetes. The master node comfortably runs on 2 cores and 4 GB RAM, while workers can start even smaller and scale as needed.

Creating the Ubuntu Template

If you haven't already, create a VM template in Proxmox:

- Download Ubuntu 22.04 cloud image

- Create a VM in Proxmox

- Configure cloud-init settings

- Convert to template

This template becomes the foundation for Terraform automation.
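The four steps above can be sketched with Proxmox's `qm` CLI, run on a Proxmox host. This is one possible way, not necessarily how the template in this guide was built. The VM ID 9000, the storage name `local-lvm`, and the template name are assumptions, and the disk name `vm-9000-disk-0` is what LVM-thin storage typically produces (other storage types name it differently). The sketch uses the default SeaBIOS firmware; a UEFI template would also need OVMF firmware and an EFI disk. By default it only prints the commands.

```shell
# Sketch: build an Ubuntu 22.04 cloud-init template on a Proxmox host.
# Assumptions (not from the article): VM ID 9000, storage "local-lvm",
# template name "ubuntu-2204-template". DRY_RUN=1 (default) only prints commands.
VMID="${VMID:-9000}"
STORAGE="${STORAGE:-local-lvm}"
IMG="jammy-server-cloudimg-amd64.img"

run() {
  # Print the command in dry-run mode, execute it otherwise.
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

build_template() {
  # 1. Download the Ubuntu 22.04 (jammy) cloud image
  run wget -q "https://cloud-images.ubuntu.com/jammy/current/$IMG"
  # 2. Create a VM and import the image as its disk
  run qm create "$VMID" --name ubuntu-2204-template --cores 2 --memory 4096 \
    --net0 virtio,bridge=vmbr0
  run qm importdisk "$VMID" "$IMG" "$STORAGE"
  run qm set "$VMID" --scsihw virtio-scsi-pci --scsi0 "$STORAGE:vm-$VMID-disk-0"
  # 3. Attach a cloud-init drive and boot from the imported disk
  run qm set "$VMID" --ide2 "$STORAGE:cloudinit" --boot order=scsi0
  # 4. Convert the VM to a template
  run qm template "$VMID"
}
```

Calling `build_template` prints the six commands for review; `DRY_RUN=0 build_template` would execute them.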

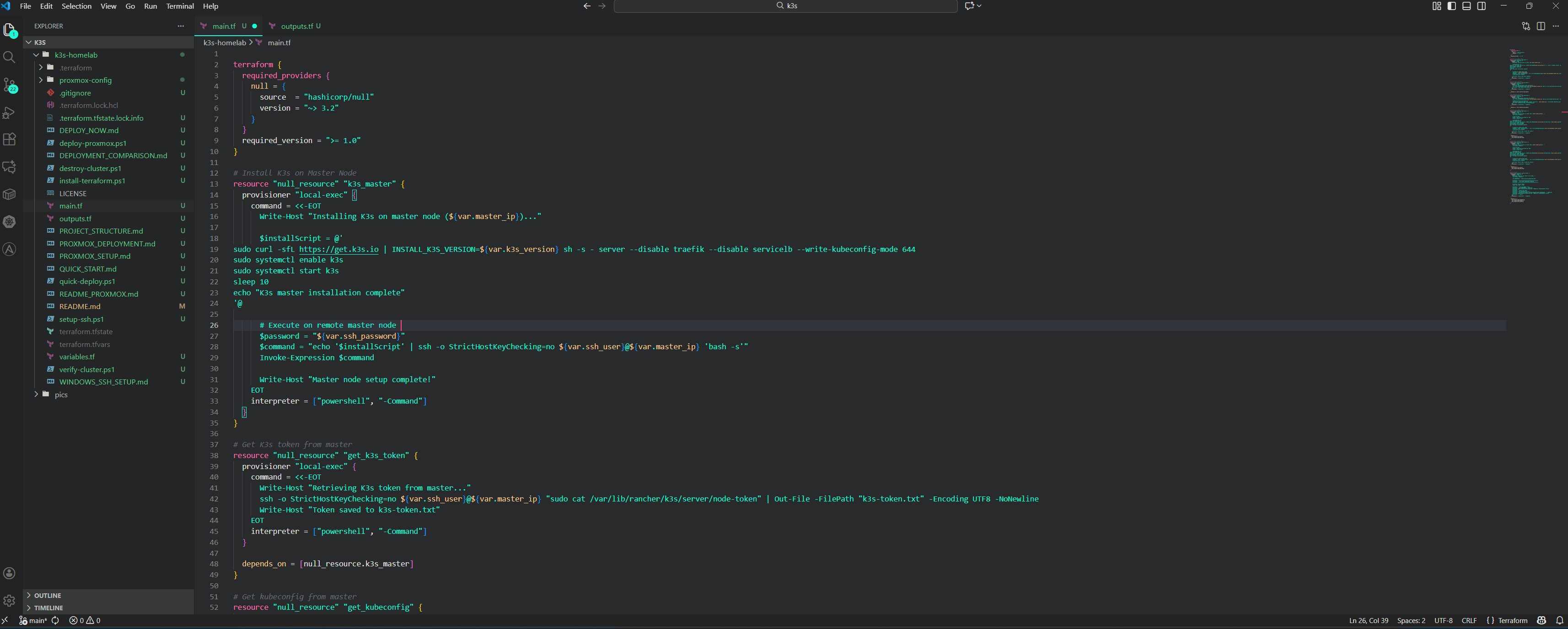

🛠️ Part 2: Terraform Automation

Infrastructure as Code makes this setup reproducible and version-controlled. Here's my Terraform structure:

terraform/
├── main.tf              # Provider and main configuration
├── variables.tf         # Variable definitions
├── proxmox-master.tf    # Master node definition
├── proxmox-worker1.tf   # Worker 1 definition
├── proxmox-worker3.tf   # Worker 3 definition
└── cloud-init/          # Cloud-init configurations
    ├── master.yaml
    ├── worker1.yaml
    └── worker3.yaml

Development Environment - Managing K3s infrastructure as code using VSCode on Pop!_OS with Terraform configuration files

💡 Get the Complete Code: All Terraform configurations, deployment scripts, and detailed documentation are available in the k3s-homelab repository on GitHub. Includes quick start guides, security best practices, and troubleshooting tips.

Key Terraform Variables

# variables.tf
variable "master_ip" {
  default = "10.10.10.11"
}

variable "worker1_ip" {
  default = "10.10.10.12"
}

variable "worker3_ip" {
  default = "10.10.10.13"
}

variable "gateway" {
  default = "10.10.10.1"
}

variable "vm_cpu_cores" {
  default = 2
}

variable "vm_memory" {
  default = 4096
}

What Terraform Does

For each node, Terraform:

- Clones the Ubuntu template from Proxmox

- Injects cloud-init configuration with hostname and network settings

- Assigns a static IP address from our planned network

- Boots the VM ready for K3s installation

The beauty here is consistency. All three nodes are configured identically (except for IPs and hostnames), eliminating configuration drift.

Deploying with Terraform

# Initialize Terraform
terraform init

# Preview changes
terraform plan

# Deploy all VMs
terraform apply -auto-approve

Within minutes, you'll have three fresh Ubuntu VMs ready for Kubernetes.
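Freshly cloned VMs take a little while to finish cloud-init, so it can help to wait for SSH before moving on. This is a rough sketch, assuming bash and the coreutils `timeout` command on the workstation and the node IPs from the network table. The function names are hypothetical.

```shell
# Sketch: wait until SSH (TCP port 22) answers on every node.
# Assumes bash and coreutils `timeout` are installed on the workstation.
NODES="10.10.10.11 10.10.10.12 10.10.10.13"

port_open() {
  # Succeeds if a TCP connection to host $1, port $2 opens within 2 seconds.
  timeout 2 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

wait_for_ssh() {
  # $1 = host, $2 = max attempts (default 30), roughly 2 seconds apart
  host=$1
  tries=${2:-30}
  while [ "$tries" -gt 0 ]; do
    port_open "$host" 22 && return 0
    tries=$((tries - 1))
    sleep 2
  done
  return 1
}

wait_for_all() {
  # Stop at the first node that never becomes reachable.
  for ip in $NODES; do
    wait_for_ssh "$ip" || { echo "$ip not reachable" >&2; return 1; }
    echo "$ip is up"
  done
}
```

Running `wait_for_all` after `terraform apply` returns once all three nodes accept connections, or fails on the first node that stays unreachable.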

🐋 Part 3: Installing K3s

K3s is a lightweight Kubernetes distribution perfect for edge computing, IoT, CI/CD, and homelabs. It's a single binary under 100MB that includes everything you need.

Step 1: Install K3s on Master Node

SSH into the master node from your admin machine (replace <user> with your VM's login user):

ssh <user>@10.10.10.11

Install K3s server (master):

curl -sfL https://get.k3s.io | sh -

This single command:

- Downloads and installs K3s

- Sets up systemd services

- Configures the control plane

- Starts the Kubernetes API server

Verify installation:

sudo systemctl status k3s
sudo kubectl get nodes

Step 2: Retrieve the Node Token

Worker nodes need a token to join the cluster securely. Retrieve it with:

sudo cat /var/lib/rancher/k3s/server/node-token

Copy this token; you'll need it for the worker nodes. It looks something like:

K107c4e1b2a8f3d9e6f5a4b3c2d1e0f9a8b7c6d5e4f3a2b1c0d9e8f7a6b5c4d3::server:a1b2c3d4e5f6

Step 3: Join Worker Nodes

SSH into worker1 (replace <user> with your VM's login user):

ssh <user>@10.10.10.12

Install K3s agent and join the cluster:

curl -sfL https://get.k3s.io | K3S_URL="https://10.10.10.11:6443" \
  K3S_TOKEN="<YOUR_TOKEN_HERE>" sh -

Repeat for worker3:

ssh <user>@10.10.10.13

curl -sfL https://get.k3s.io | K3S_URL="https://10.10.10.11:6443" \
  K3S_TOKEN="<YOUR_TOKEN_HERE>" sh -

Important: Replace <YOUR_TOKEN_HERE> with the actual token from the master node.
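With only two workers the manual steps are fine, but the same join could be scripted from the admin machine. This is a minimal sketch, assuming key-based SSH and passwordless sudo on the VMs. `SSH_USER` is a placeholder you must set to your VM's login user, and the function names are hypothetical.

```shell
# Sketch: join both workers from the admin machine in one pass.
# SSH_USER is an assumption: export it as your VM's login user before use.
MASTER_IP="10.10.10.11"
WORKERS="10.10.10.12 10.10.10.13"

join_command() {
  # $1 = node token; prints the agent install command for this cluster
  printf 'curl -sfL https://get.k3s.io | K3S_URL="https://%s:6443" K3S_TOKEN="%s" sh -\n' \
    "$MASTER_IP" "$1"
}

join_workers() {
  # Read the token from the master, then run the installer on each worker.
  token=$(ssh "$SSH_USER@$MASTER_IP" sudo cat /var/lib/rancher/k3s/server/node-token) || return 1
  for ip in $WORKERS; do
    ssh "$SSH_USER@$ip" "$(join_command "$token")" || return 1
  done
}
```

Keeping `join_command` separate makes it easy to print and inspect the exact command before `join_workers` runs it remotely.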

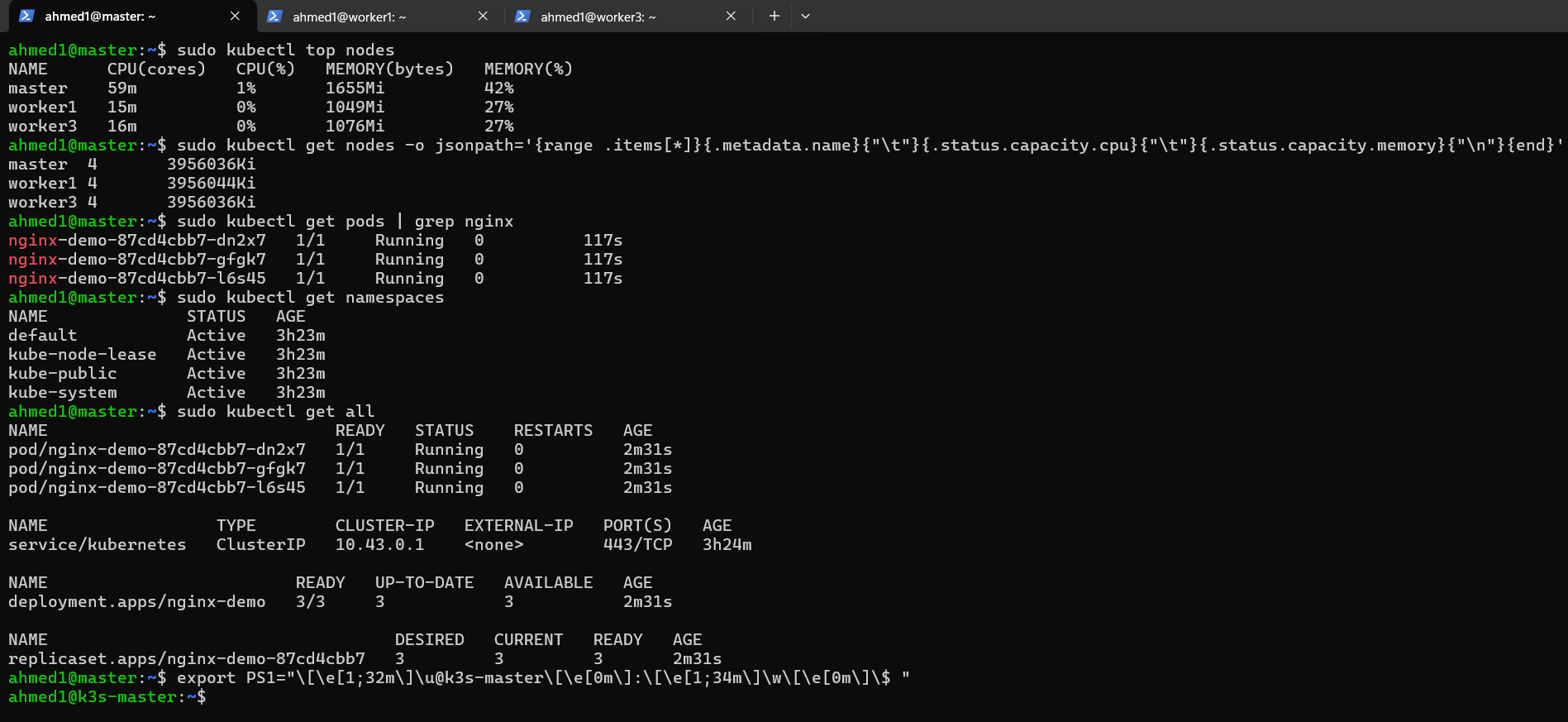

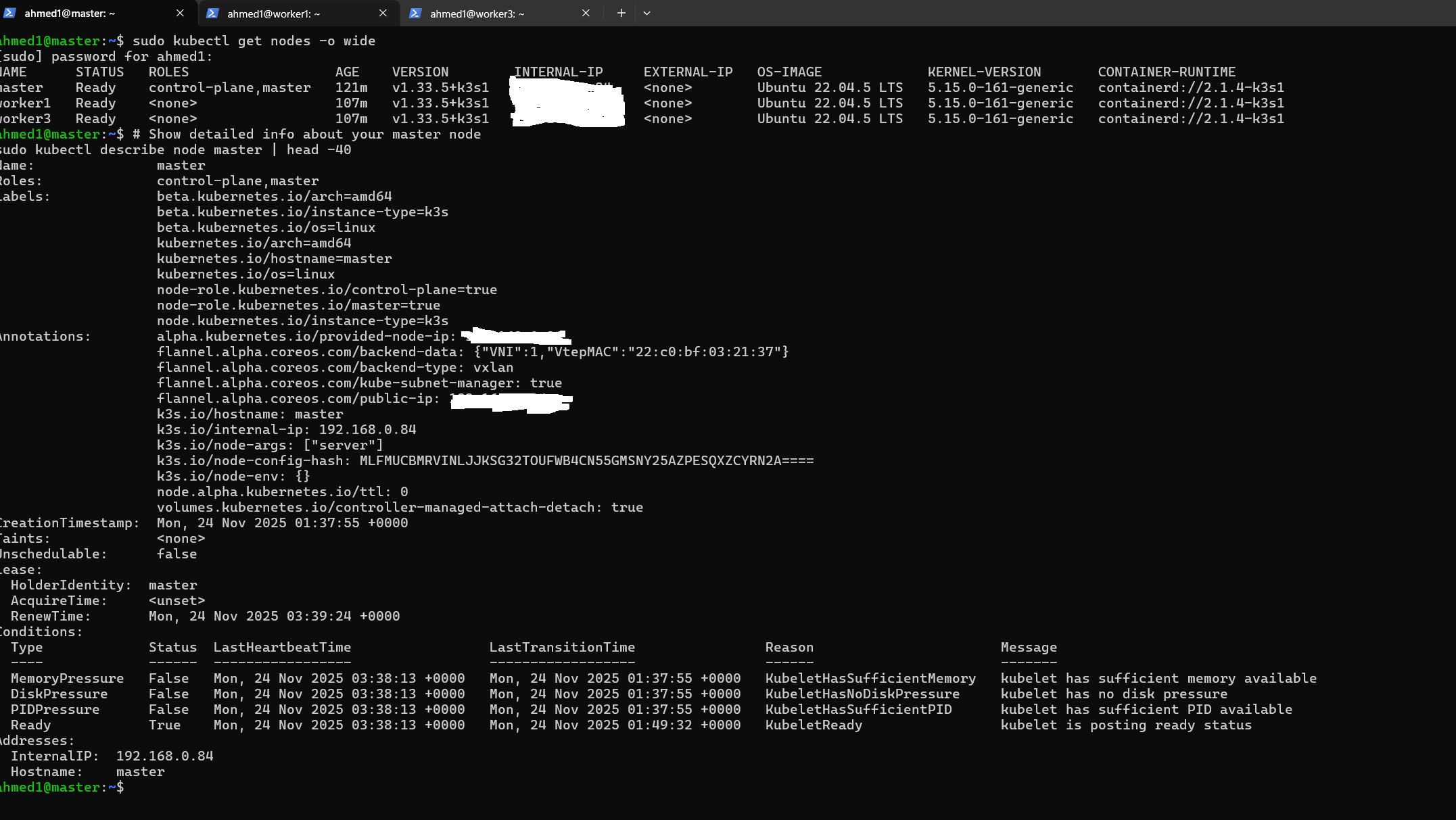

Step 4: Verify Cluster Health

Back on the master node, check that all nodes joined successfully:

sudo kubectl get nodes

Expected output:

NAME      STATUS   ROLES                  AGE   VERSION
cluster   Ready    control-plane,master   5m    v1.28.x+k3s1
worker1   Ready    <none>                 2m    v1.28.x+k3s1
worker3   Ready    <none>                 1m    v1.28.x+k3s1

All nodes should show Ready status. If a node shows NotReady, check the K3s service logs with sudo journalctl -u k3s -f (master) or sudo journalctl -u k3s-agent -f (workers).
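For a quick scripted check, the STATUS column can be filtered rather than read by eye. This is a small sketch; `not_ready_nodes` is a hypothetical helper that reads `kubectl get nodes --no-headers` output on stdin.

```shell
# Sketch: list nodes whose STATUS column is anything other than "Ready".
# Reads `kubectl get nodes --no-headers` output on stdin.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Usage on a machine with cluster access:
#   sudo kubectl get nodes --no-headers | not_ready_nodes
```

Empty output means every node reports Ready. A cordoned node shows `Ready,SchedulingDisabled` and would also be listed, which is usually worth knowing about. If you would rather block until the nodes are up, `kubectl wait --for=condition=Ready nodes --all --timeout=120s` does that.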

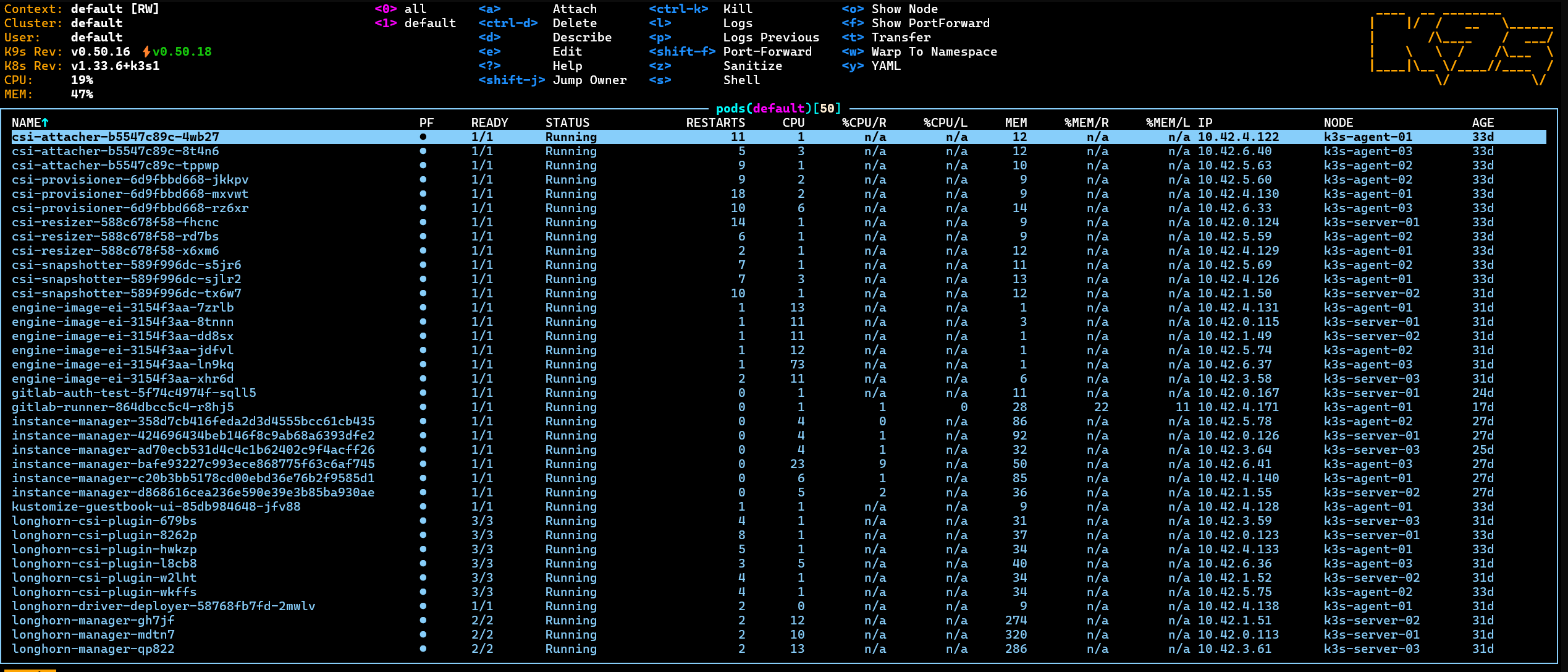

SSH Sessions from PowerShell - Connecting to K3s master and worker nodes (worker1 and worker3) for cluster management

Live 3-Node K3s Cluster - All nodes are Ready and running. You can see the nginx deployment is up and running as a test. More applications will be deployed soon!

📁 Part 4: Configuring kubectl on Your Workstation

Managing the cluster from your laptop is more convenient than SSH'ing into the master node every time. Let's set up remote access.

Copy Kubeconfig from Master

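On the master node, the kubeconfig file is located at /etc/rancher/k3s/k3s.yaml. Copy it to your laptop (replace <user> with your VM's login user):

# On your laptop
scp <user>@10.10.10.11:/etc/rancher/k3s/k3s.yaml ~/.kube/config

K3s writes this file readable by root only by default. If scp reports permission denied, either copy the file into your home directory on the master with sudo first, or install K3s with --write-kubeconfig-mode 644.

Update Server Address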

Edit ~/.kube/config and change the server address from 127.0.0.1 to your master's IP:

apiVersion: v1
clusters:
- cluster:
    server: https://10.10.10.11:6443 # Change from 127.0.0.1

Set Proper Permissions

chmod 600 ~/.kube/config

Test Remote Access

kubectl get nodes

You should see all three nodes listed. Congratulations! You're now managing your homelab cluster from your workstation.
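The copy, server-address edit, and permission steps above could be combined into one helper. This is a sketch under a few assumptions: `SSH_USER` is your VM's login user with passwordless sudo, the function names are hypothetical, and the script overwrites any existing ~/.kube/config, so back that up first if you manage other clusters.

```shell
# Sketch: fetch the K3s kubeconfig and point it at the master instead of localhost.

rewrite_server() {
  # Filter: reads a kubeconfig on stdin, writes it to stdout with the
  # localhost API address replaced by the master IP given as $1.
  sed "s#https://127\.0\.0\.1:6443#https://$1:6443#"
}

fetch_kubeconfig() {
  # $1 = master IP. SSH_USER is an assumption (your VM login user).
  # Warning: overwrites ~/.kube/config.
  mkdir -p "$HOME/.kube"
  ssh "$SSH_USER@$1" sudo cat /etc/rancher/k3s/k3s.yaml \
    | rewrite_server "$1" > "$HOME/.kube/config"
  chmod 600 "$HOME/.kube/config"
}
```

With `SSH_USER` exported, `fetch_kubeconfig 10.10.10.11` followed by `kubectl get nodes` should give the same result as the manual steps.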

Quick Verification Commands

# Check cluster info

kubectl cluster-info

# View all pods across namespaces

kubectl get pods --all-namespaces

# Check K3s-specific components

kubectl get pods -n kube-system

🎓 What You've Built

Let's recap what you now have running:

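- Multi-Node Foundation: Three-node cluster where workloads can be rescheduled if a worker fails (the single master is still a single point of failure; Version 2 addresses this with three control planes)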

- Infrastructure as Code: Reproducible VM deployment via Terraform

- Production-Like Environment: Real Kubernetes distribution (K3s) that behaves like full K8s

- Remote Management: Full kubectl access from your workstation

- Scalable Platform: Ready for deploying applications, testing, and learning

🚀 Next Steps

Now that your cluster is running, here are some ideas for what to do next:

Deploy Your First Application

kubectl create deployment nginx --image=nginx

kubectl expose deployment nginx --port=80 --type=NodePort

kubectl get services

Install Essential Tools

- Helm: Package manager for Kubernetes

- MetalLB: Load balancer for bare metal

- Cert-Manager: Automated TLS certificates

- Longhorn: Distributed block storage

- Traefik or Nginx Ingress: Ingress controllers

Add Monitoring

- Prometheus + Grafana: Metrics and visualization

- k9s: Terminal UI for Kubernetes

Explore GitOps

- ArgoCD or Flux: Automated deployments from Git

💡 Tips and Best Practices

Backup Your Kubeconfig: Store it securely and keep backups. Without it, you'll need to SSH into the master to manage the cluster.

Use Labels: Tag nodes with roles or capabilities for targeted pod scheduling.

Resource Limits: Always set resource requests and limits for production workloads.

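Regular Updates: Keep K3s updated by re-running the install script with the same options you used originally; it replaces the binary and restarts the service. If you swap the binary manually, restart with sudo systemctl restart k3s (master) and sudo systemctl restart k3s-agent (workers).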

Snapshot VMs: Take Proxmox snapshots before major changes. They're lifesavers when experiments go wrong.
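The labeling and resource-limit tips might look like this in practice. This is an illustrative sketch only: the label `disk=ssd` and the CPU and memory figures are assumptions rather than recommendations, and the deployment name `nginx` refers to the test deployment from the Next Steps section.

```shell
# Sketch of the labeling and resource-limit tips. The label disk=ssd and the
# CPU/memory figures are illustrative assumptions, not recommendations.
KUBECTL="${KUBECTL:-kubectl}"

label_node() {
  # $1 = node name, $2 = key=value label
  "$KUBECTL" label node "$1" "$2" --overwrite
}

set_nginx_limits() {
  # Applies requests and limits to the nginx test deployment
  "$KUBECTL" set resources deployment nginx \
    --requests=cpu=100m,memory=64Mi --limits=cpu=250m,memory=128Mi
}
```

For example, `label_node worker1 disk=ssd` tags a node so pods can target it with a matching nodeSelector. Setting `KUBECTL=echo` first prints the commands instead of running them.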

🐛 Troubleshooting Common Issues

Node Shows NotReady: Check network connectivity and ensure K3s service is running.

Pods Stuck in Pending: Likely resource constraints. Check with kubectl describe pod <pod-name>.

Can't Connect from Workstation: Verify firewall rules allow port 6443 and check kubeconfig server address.

Worker Won't Join: Double-check the token and master URL. If the master was reinstalled, it generated a new token and the old one no longer works.

🎉 Conclusion

You now have a fully functional Kubernetes homelab that rivals many production setups. This cluster serves as an excellent learning platform and testing ground for containerized applications.

The combination of Proxmox for virtualization, Terraform for automation, and K3s for Kubernetes gives you a powerful, reproducible infrastructure that you can tear down and rebuild at will.

What will you deploy on your cluster first? Let me know in the comments below!

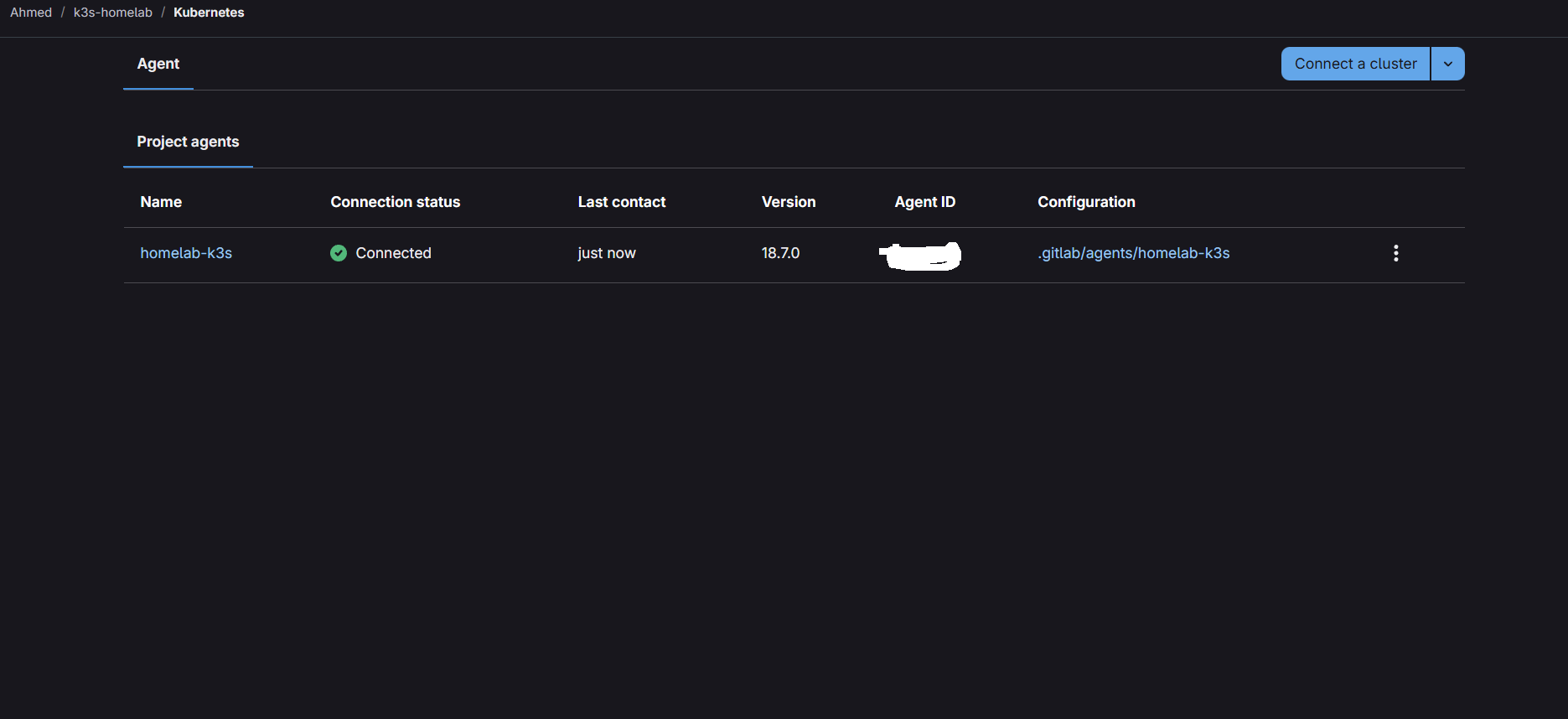

🚀 Deploy Your Own K3s Cluster

Ready to build your own Kubernetes homelab? Access my private GitLab repo here:
